News release

From:

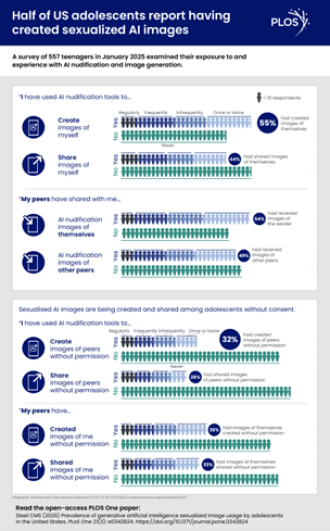

Half of surveyed U.S. teens have used AI to create sexualized images

About one-third of teens also reported being victims of these nudification tools, with sexualized images of themselves created and shared without their consent

In a survey study of U.S. teens, more than half (55.3%) reported that they had created at least one image using nudification tools, which use generative artificial intelligence (GenAI) to show what an individual may look like without clothing. Chad Steel of George Mason University, Virginia, U.S., presents these findings in the open-access journal PLOS One on March 18, 2026.

Prior research has suggested that the creation and distribution of sexualized images—whether or not GenAI is involved—have become normalized among U.S. adolescents. GenAI can be used for sexual exploitation, such as the non-consensual creation of sexualized images of individuals. Reports of GenAI misuse by adolescents have risen, with victims experiencing consequences similar to the impacts of other forms of child sexual exploitation material, such as a sense of dehumanization and permanent life disruptions.

However, the overall prevalence of GenAI use for creating sexualized images among adolescents has been unclear. To help deepen understanding, Steel analyzed the results of an online survey of 557 English-speaking U.S. residents aged 13 to 17. The survey was anonymous and conducted with parental consent. It asked about participants' experiences creating, sharing, and viewing sexualized GenAI images, whether of themselves or of others, and whether made consensually or non-consensually.

Of the teens surveyed, 55.3 percent reported that they had used nudification tools to create at least one image of themselves or others, and 54.4 percent reported that they had received such an image. In addition, 36.3 percent reported that at least one sexualized GenAI image of themselves had been created by someone else without their consent, and 33.2 percent reported that at least one sexualized GenAI image of themselves had been non-consensually distributed.

Steel found that these results were largely similar across demographic categories, including age. Although usage was widespread for both male and female participants, male participants reported higher rates of creating and distributing sexualized GenAI images of themselves and others, whether consensually or non-consensually.

These findings could help inform legal policies and educational efforts to promote safe use of GenAI tools. This was an exploratory study, and future research could provide further insights, such as whether similar results hold true for adolescents in other countries.

Steel adds: “Teens are no longer just digital natives but AI-natives. ‘Nudification’ and GenAI apps are their new ‘sexting’, only with more challenging issues surrounding consent.”
